Teaching a Smol Model to Write SQL Queries

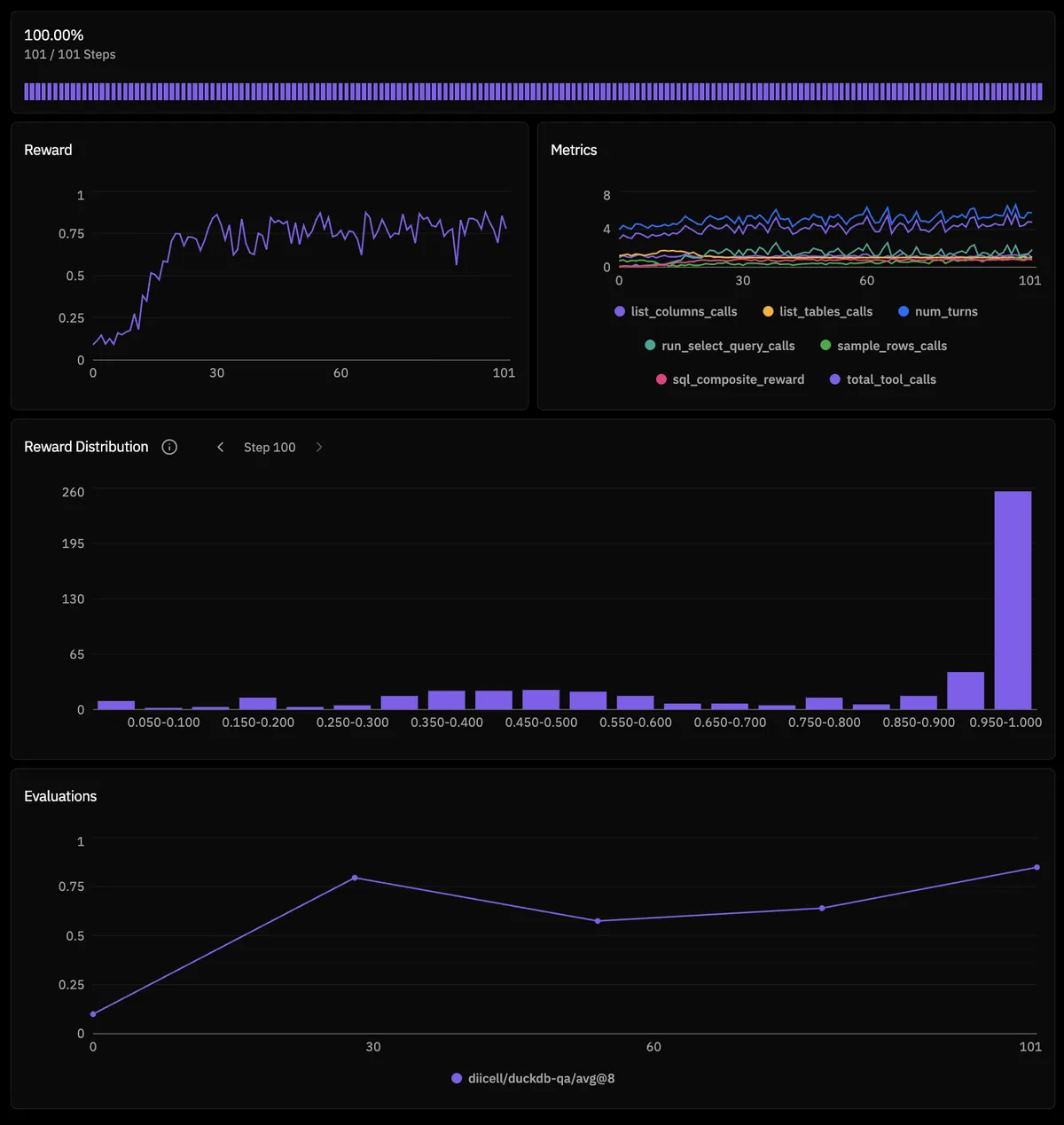

INLAND EMPIRE [Trivial: Success] — You see a reward curve going up. Your neurons fire. The primal instinct of a chart-watcher awakens — years of staring at candlesticks on TradingView have permanently rewired your dopamine system. Green line go up means good. You don't need to understand why. You just need more of it.

When I first saw RL reward curves on the TL with a clean upward trend my neurons got activated. But at the moment I was thinking that this is something I can't do, it will require me a lot of work and brain power. I wasn't familiar with the techniques and terminology in reinforcement learning, my previous experiences with training models were small SFT runs with knowledge distillation on telegram posts sentiment classifications, where I would teach a small model to reason and then provide a structured JSON classification output. And there the main curve is training loss and should go down, which is not so attractive compared to RL reward curve going up (maybe my years of degenerate looking at TradingView charts fried my brain into this). So I had zero knowledge in how and what do I need to train a model with RL, like what is the right batch size, what is rollout in this context, what is policy, what is GRPO and how it works, what is the rubric, reward funcs, verifiable and non-verifiable tasks etc.

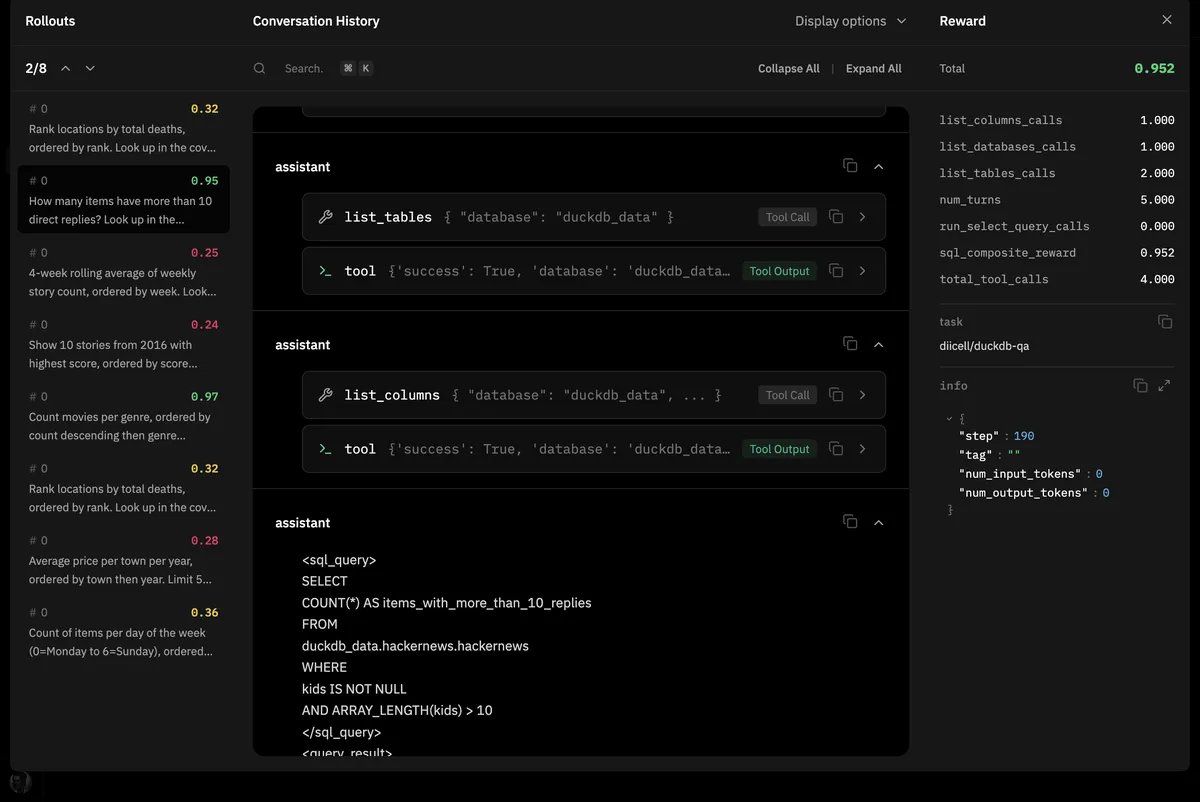

ENCYCLOPEDIA [Easy: Success] — A glossary for the uninitiated, since this field speaks in acronyms and borrowed metaphors. Rollout: one complete attempt by the model to solve a problem — the full conversation from system prompt to final answer, like a student's entire exam paper. GRPO (Group Relative Policy Optimization): an RL algorithm that generates multiple rollouts per problem, scores them all, then updates the model to produce more of the high-scoring ones and fewer of the low-scoring ones. No separate critic model needed — the group IS the critic. Rubric: the collection of reward functions that grade each rollout, your automated teaching assistant. Reward function: the numerical score (0.0 to 1.0) that tells the model how well it did. Everything downstream depends on this single number.

Lucky for me Will Brown made a library called verifiers. And in summer 2025 I started to tinker with RL. At the beginning the verifiers framework was very experimental and few people knew about it, but it was v well made in the manner that even a complete profan like me could look at the example RL env, tweak a few things and start a training run. I've tinkered for a few weeks, didn't get pleasant results and put my RL bizarre adventure on pause. But the verifiers grew stronger every day and in fall 2025 Prime Intellect announces its RL residency program where u could submit your RL env idea and if it is interesting RL env they can give u compute credits to tinker and run experiments. I did submit my idea of data analytics/engineering text2sql RL env and went on holiday to Japan. And when I came back the good news arrived at my mailbox, my idea was approved by Prime Intellect and I received compute credits, wow I thought it is incredible.

But I had zero tools and resources (experience in creating RL env and dataset) to make this RL env come to life. So this is a story of how I went from knowing nothing about RL training to getting a 4B model to correctly answer 84.8% of held-out SQL questions. The environment is available here.

The Wrong Database

HALF LIGHT [Medium: Success] — Your gut tells you: just use ClickHouse. You use it at work. You know the syntax. It'll be easy. Something twitches in the back of your skull. A warning. Your gut is wrong. Your gut has been wrong about many things. But you're already reaching for the keyboard...

So I started gathering dataset. My db of choice was ClickHouse, cause we're using ClickHouse heavily at work and I wanted to make this RL env applicable at work right away. Luckily for me ClickHouse is dev oriented and they have a ClickHouse playground (a cloud version of ClickHouse where u can practice SQL skills and ClickHouse knowledge) with a good amount of text2sql examples. And they had a dedicated github repo with those questions already in a JSON file. And the ClickHouse playground dbs are based on open datasets which makes it much easier to replicate the db env. I chose 4 dbs — IMDB (movies), COVID (epidemiology), HackerNews (tech posts), and UK (regional property data) — that covers most of the SQL tasks. Picked a 100 questions related to those dbs.

And again verifiers repo had an MCP example and my first thoughts were hehe okay I can use this MCP example, replace the MCP config with ClickHouse MCP, replace dataset with mine and call it a day, wow v nice.

But this didn't work out.

The Playground Limit

My initial plan was to use the ClickHouse playground directly — the verifiers repo had an MCP example and I figured I'd just point it at the playground's API. But the playground has a limit of 200 requests per hour, which in RL training is sand in flip flops. One batch of rollouts would blow through that in seconds.

The chDB MCP

LOGIC [Medium: Success] — If the playground won't take your calls, bring the database home. chDB is ClickHouse but embedded — runs in-process, same SQL dialect, no server needed. Replicate the playground data locally and you're free from rate limits. Elegant in theory.

Okay so I replicated the playground data into chDB — a ClickHouse embedded version. Built a small version with all 4 dbs, wired it up through an MCP server. It worked. But the MCP toolset was limited — basically just run_select_query. Small models need scaffolding tools to explore the schema before writing queries. Things like list_tables, list_columns, sample_rows. Without those the model is writing SQL blind.

The Docker Idea

The thought was — maybe I should run a full ClickHouse server in Docker. Real ClickHouse has proper tooling, the ClickHouse MCP server has a richer set of tools, and Docker would handle the connection management. Set it up locally and it ran fine. But when I tried to deploy it through prime_sandboxes (managed containers for Prime Intellect's infra), ClickHouse inside the sandbox would only bind to 127.0.0.1, rejecting external connections.

DRAMA [Medium: Success] — And here you are, elbow-deep in container networking configs, port binding, listen_host XML injection. The audience is getting restless. They came to see a model learn SQL, not a man wrestle with YAML files. Even you're getting bored of your own subplot.

I wrote a fix injecting <listen_host>0.0.0.0</listen_host> into the ClickHouse config on startup. But by this point I was losing faith in the whole approach.

The ToolEnv Idea

COMPOSURE [Challenging: Failure] — Okay. Forget MCP. Forget Docker. Forget sandboxes. What if you just write a ClickHouse ToolEnv directly — Python functions wrapping chDB queries, no subprocess, no networking? Back to basics. Surely this will work.

So I went back to chDB but this time as a direct ToolEnv — no MCP layer, just Python functions calling chDB. And here's where the original sin caught up with me: chDB allows only one connection per session. prime-rl forks worker processes, each child inherits the parent's file descriptors but cannot share the chDB session. The database became a contested resource.

_session_cache: Dict[int, chs.Session] = {}

def get_chdb_session() -> chs.Session:

pid = os.getpid()

if pid not in _session_cache:

_session_cache[pid] = chs.Session(SESSION_DIR)

return _session_cache[pid]

The singleton pattern helped locally but the fundamental limitation remained — chDB wasn't built for this.

VOLITION [Legendary: Success] — Stop. Just — stop. You've spent a week wrestling with ClickHouse in every form — playground, embedded, containerized, MCP-wrapped, ToolEnv-wrapped. The actual model hasn't learned a single useful thing about SQL. You're building a Rube Goldberg machine to deliver a pizza next door. Walk next door. Your willpower is finite and you're spending it on plumbing.

After a week the calculation was clear: DuckDB. Embedded. No server, no Docker, no networking, no MCP. It runs in-process, handles multiple connections without session problems. Same analytical SQL capabilities. The new env used verifiers' native ToolEnv with Python functions as tools. No subprocess management. No port binding. Just Python. I recompiled the same 4 dbs in DuckDB, rewrote questions and synthetically generated answers using DeepSeek V3.

Now the model could actually start learning.

The Reward Function

SHIVERS [Heroic: Success] — The reward function is not a grading rubric. It is the entire learning signal. It is the universe your model inhabits. Every decimal point in its output reshapes the model's soul. Get it wrong and the model learns to game it. Get it right and you get genuine intelligence. There is no middle ground.

ENCYCLOPEDIA [Medium: Success] — In RL training, the reward function is the ONLY teacher. There's no human labeler correcting mistakes, no training loss pointing at the right answer. The model generates multiple rollouts, each gets a numerical score, and GRPO uses the relative differences between those scores to update weights. If the reward function gives high scores for bad behavior, the model learns bad behavior. If it gives the same score for everything, the model learns nothing at all. The reward function IS the curriculum.

This is where the real work began. The reward function went through five distinct designs. Each one fixed a problem and revealed the next one hiding behind it.

The Binary Cliff

When I switched to DuckDB, the first reward was the simplest thing: hash the result table, compare to the gold hash. Match = 1.0, no match = 0.0. This is what a lot of text-to-sql RL environments use — binary execution accuracy. Did the query return the right rows? Yes or no. It makes intuitive sense — if the output is right, it's right.

LOGIC [Medium: Success] — Intuitive, sure. But consider what this looks like to a 4B model. A query returning

[(1,), (2,), (3,)]when gold was[(1,), (2,), (4,)]gets the same 0.0 as a query returning total garbage. From GRPO's perspective, being 99% right is indistinguishable from being 100% wrong. If all 16 rollouts in a batch get 0.0, the advantage is zero everywhere. No gradient.

ENCYCLOPEDIA [Medium: Success] — This is "advantage collapse." GRPO computes advantage by comparing each rollout's reward against the group mean. If all rollouts score the same, every advantage is zero. Zero advantage means zero gradient means no learning. Larger models can brute-force their way past this — they're capable enough that some rollouts land on the exact right answer, creating the variance GRPO needs. A 4B model on hard SQL questions? Almost never gets the exact answer by chance. Binary rewards create a desert of zero signal for the questions that matter most.

The model quickly learned the easy questions — the ones where a 4B model can brute-force the right answer — and reward climbed to ~0.44. Then it hit a wall. On harder questions, all 16 rollouts scored 0.0. Every single one. GRPO saw no difference between a query that was one column off and a query that was pure nonsense. The model had no way to improve on the questions it couldn't already solve perfectly. Stuck at 44% forever.

Partial Credit

So I added continuous scoring. Jaccard similarity on column names, F1 on result rows. Now being 80% right scored higher than being 10% right.

ENCYCLOPEDIA [Easy: Success] — Jaccard similarity: |A ∩ B| / |A ∪ B|. If predicted columns are {name, age, city} and gold is {name, age, score}, Jaccard = 2/4 = 0.5. Row F1 balances precision and recall on result rows. Continuous scores between 0.0 and 1.0 instead of the binary cliff.

This helped — the landscape had a gradient now and the model was actually learning, not just hacking. But a new ceiling appeared around ~0.45-0.50. This time it wasn't reward hacking — the model was genuinely trying to write correct SQL. The problem was subtler: with 16 rollouts per question all scoring between 0.42 and 0.52, GRPO's advantages were basically noise. Every rollout was similarly mediocre. The gradient signal was there but too weak to push past the plateau.

SAVOIR FAIRE [Medium: Success] — A different kind of stuck. Before, the model was gaming the system. Now it's genuinely trying and genuinely failing — all 16 attempts landing in the same mediocre band. The algorithm can't tell a 0.44 rollout apart from a 0.48 one. It's like grading exams where everyone gets a C+. How do you teach when nobody is notably good or notably bad?

The partial credit ceiling was also too generous — the max reward for wrong-but-plausible SQL was ~0.467. The gap between "close enough" and "exact match" wasn't wide enough to motivate the final push.

Standing on the Shoulders of Giants

ENCYCLOPEDIA [Difficult: Success] — The Reasoning-SQL paper describes a composite reward for text-to-sql RL: execution accuracy (weight 3), LLM-as-a-judge / RLAIF (weight 2), n-gram similarity (weight 1), schema linking (weight 1), syntax (weight 1), format (weight 1). Their execution accuracy is binary — they explicitly note "the binary and sparse nature of it would create challenges for RL optimization." The paper's key contribution is the decomposition into multiple weighted reward components and the use of an LLM judge (RLAIF) for edge cases. The n-gram component, however, rewards surface-form mimicry — SQL that looks like the gold query rather than SQL that produces correct results. A dubious signal at best.

After reading the Reasoning-SQL paper, the path forward was clear. I took their multi-component approach but replaced binary execution with a continuous soft score. Max reward for wrong-but-plausible SQL dropped from 0.467 to 0.250. The landscape became honest — partial credit exists but isn't comfortable.

| Query Type | Binary | Partial Credit | Soft Execution |

|---|---|---|---|

| Empty/format only | 0.0 | 0.071 | 0.071 |

| Valid but wrong | 0.0 | 0.467 | 0.250 |

| Partially correct | 0.0 | 0.55 | 0.65 |

| Exact match | 1.0 | 1.0 | 1.0 |

The Model Finds a New Exploit

PERCEPTION [Medium: Success] — Wait. Something's off. Look at the rollouts carefully. The model is... not calling run_select_query. It writes syntactically correct SQL with good schema coverage, collects ~0.4 from format + syntax + schema, and never actually runs the query. Why risk a tool call that might fail when you can get 40% reward for free? You almost admire the efficiency of it.

Every exploit I closed, the model found another. This time: skip run_select_query entirely. Write plausible SQL, collect partial credits, never actually execute anything. The fix was a multiplicative penalty on the entire reward:

tool_multiplier = 1.0 if used_run_select_query else 0.15

reward = base_reward * tool_multiplier

INTERFACING [Medium: Success] — Additive bonuses are suggestions. Multiplicative penalties are laws. The model can shrug off a +0.1 bonus — it's pocket change. It cannot shrug off a 0.15x multiplier — that's having your entire paycheck docked. Remember this distinction.

A 0.15x multiplier made skipping execution catastrophic: 0.4 reward became 0.06. You cannot opt out of running queries anymore.

The Final Shape

The final reward is a composite of everything I learned:

W_SOFT_EXEC = 5.0

W_SYNTAX = 1.0

W_SCHEMA = 0.5

W_FORMAT = 0.5

best_f1 = max(row_f1, value_f1)

sharpened_f1 = best_f1 ** 1.5

near_bonus = 0.15 if best_f1 >= 0.95 else 0.0

tool_multiplier = 1.0 if used_run_select_query else 0.15

reasoning_multiplier = _reasoning_quality(completion) # 0.4 to 1.0

exploration_bonus = 0.08 if _has_exploration(completion) else 0.0

self_correction = 0.05 if _has_self_correction(completion) else 0.0

error_recovery = 0.08 if _has_error_recovery(completion) else 0.0

completion_bonus = 0.03 if _ends_with_query(completion) else 0.0

total = (W_SOFT_EXEC * r_soft_exec + W_SYNTAX * r_syntax

+ W_SCHEMA * r_schema + W_FORMAT * r_format)

base = (total / W_TOTAL) * tool_multiplier * reasoning_multiplier

reward = min(1.0, base + exploration_bonus + self_correction

+ error_recovery + completion_bonus)

The LLM judge came from two places: the Reasoning-SQL paper's RLAIF component, and edge cases I kept seeing in rollouts where the model returned correct values but with different column names or row ordering. GPT-4.1-mini fires only when the programmatic metrics disagree — high value F1 but low row F1, suggesting the answer is right but the structure is different. Targeted, not universal. Maybe 20-30% of rollouts.

Poison in the Dataset

PERCEPTION [Hard: Success] — Something is wrong with your gold answers. You can feel it in the oscillation. The reward swings where it should be climbing steadily. You audit the dataset and there it is, hiding in plain sight: 30-40 questions use

LIMITwithoutORDER BY. The gold results are non-deterministic. You've been training on noise this entire time.

Running a 200-step experiment and hitting a plateau at 0.49 prompted me to audit the training data. The gold SQL queries had a silent problem: approximately 30-40 questions used LIMIT without ORDER BY.

-- This returns ARBITRARY rows

SELECT name, score FROM movies LIMIT 5

The gold execution result was itself non-deterministic — different runs would produce different gold results for the same question. From the model's perspective, a correct query could get 0.0 reward one step and 1.0 reward the next.

ENCYCLOPEDIA [Medium: Success] — Non-determinism in gold labels is uniquely poisonous for RL. In supervised learning, noisy labels just slow convergence — the model averages over them. In RL, noisy rewards create contradictory gradient signals. The model takes an action, gets reward 0.8 one time and 0.2 the next. GRPO tries to increase the probability of the 0.8 rollout and decrease the 0.2 — but they're the same action. The gradients cancel. The model oscillates instead of converging. Data quality in RL isn't nice to have — it's load-bearing.

I rewrote all questions with strict determinism: every LIMIT gets ORDER BY with tie-breakers, every aggregation produces a single deterministic result, DuckDB syntax throughout. But fixing the noise wasn't enough — the dataset had a size problem too. With only ~100 questions and batch_size=256, the model was seeing almost the entire dataset every batch. The easy questions saturated within a few steps and contributed zero gradient. The learning frontier was the medium and hard questions, but they were diluted in a sea of already-solved easy ones.

So the dataset grew in stages: first fixing the non-deterministic questions, then adding more medium and hard questions for better difficulty balance, and finally a set of surgical hard questions targeting specific failure patterns I kept seeing in rollouts — things like COUNT(*) vs COUNT(DISTINCT) confusion, join-vs-array errors, and filter-then-aggregate ordering mistakes. The final dataset: 578 questions across the 4 schemas, split 491 train / 87 eval. Published as diicell/duckdb-qa-v3.

The Reasoning Breakthrough

CONCEPTUALIZATION [Legendary: Success] — You're staring at eval rollouts from Claude and DeepSeek on the same environment. Same questions, same tools. And these bigger models — they don't just fire tool calls into the void. They talk to themselves. "The data type is BOOLEAN so I need to handle this differently." "My first query failed on GROUP BY, let me rethink." Your 4B model does none of this. It pulls the slot machine lever and hopes.

I was running bigger models — Claude, DeepSeek — through the same DuckDB environment as evaluation baselines. Same questions, same tools, same schema. And I noticed something in their rollouts that the small model never did: they reasoned between tool calls. They'd call list_columns, look at the result, and say "the price column is DECIMAL, I should CAST properly" before writing the query. They'd get an error and say "the GROUP BY is wrong because I'm selecting a non-aggregated column, let me restructure."

My Qwen3-4B was doing none of this. Pure mechanical tool calls. No thinking, no planning. Just firing queries and hoping.

INLAND EMPIRE [Formidable: Success] — The big models reason because they can. The small model doesn't because nobody showed it that thinking is welcome here. It's not a capability gap — it's a permission gap. The 4B has enough parameters to form a thought between tool calls. It just doesn't know it's allowed to.

So I built the encouragement.

The system prompt. Rewrote it to explicitly guide a reasoning workflow — explore the schema, understand data types, plan the query, execute, verify. "Before each tool call, reason about your approach." The same workflow I observed in the bigger models.

The reasoning multiplier. A continuous multiplier from 0.4 to 1.0 on the entire reward, based on how much reasoning the model shows between tool calls. A perfect query with zero reasoning gets 40% of what it deserves. Thinking becomes the economically rational choice.

ELECTROCHEMISTRY [Medium: Success] — The dopamine circuit is elegant. Reason well, get full reward. Don't reason, get 40%. The model doesn't need to understand WHY reasoning helps — it just needs to feel the difference. Pavlov would be proud. Or horrified. Possibly both.

And the reasoning appeared naturally. Not through any special tool or structured thinking mechanism — the model simply started verbalizing its thoughts between tool calls when the system prompt encouraged it and the reward function made it worthwhile. The same way the bigger models do it, just... smaller.

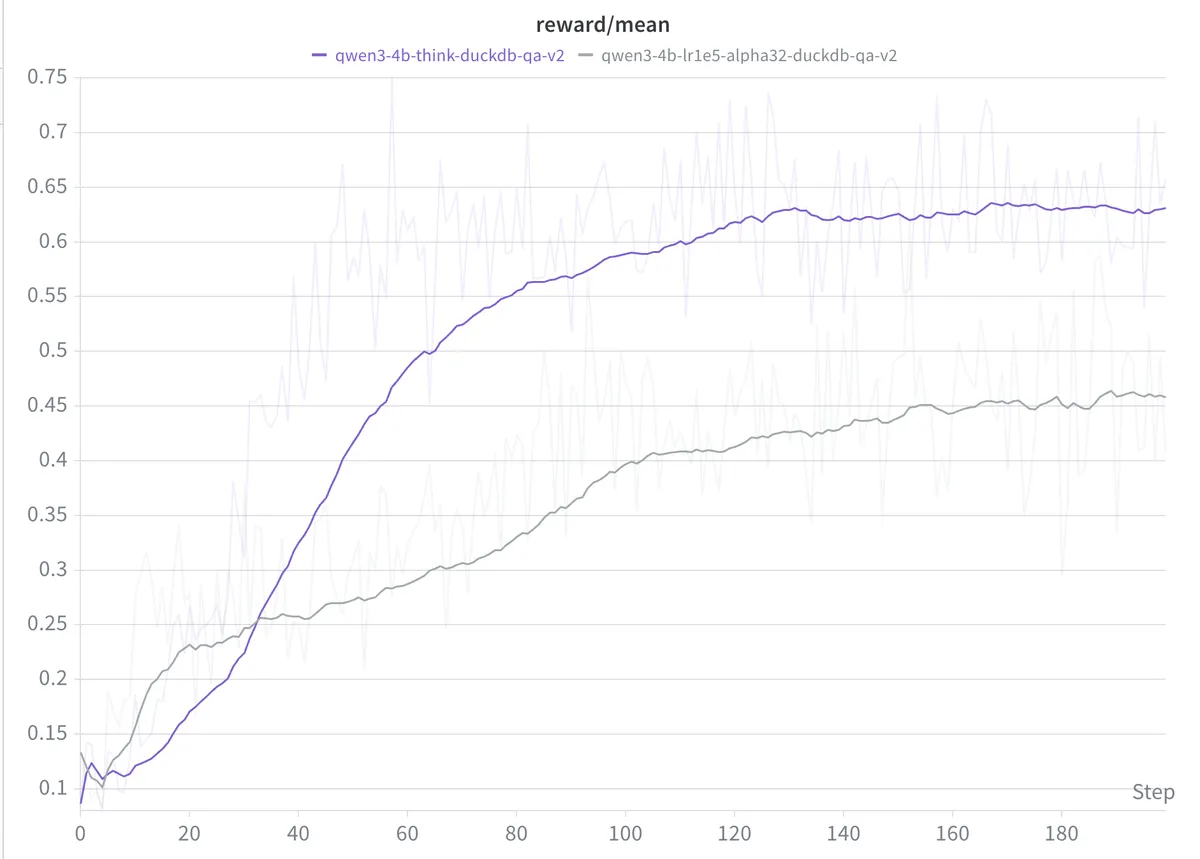

Reward jumped from ~0.44 to ~0.62.

ENCYCLOPEDIA [Challenging: Success] — Why does reasoning help so much? Two mechanisms. First, it gives the model a scratchpad — plan which columns exist, what types they are, whether NULL handling is needed, all before committing to SQL. Second, and perhaps more important for RL: reasoning dramatically increases reward variance across rollouts. Without it, all 16 rollouts produce similar mechanical queries — they converge on the same mediocre strategy. With reasoning, some rollouts reason well and some don't, creating the diversity GRPO needs for meaningful advantages. More variance means stronger gradient signal means faster learning. The reasoning doesn't just help the model think — it helps the training algorithm teach.

And the model didn't just learn to reason before queries — it learned to self-correct. Here's an actual rollout from step 20 of the alpha64 run:

The question asks for movie names with their actors and roles, using string_agg. The model explores the schema, checks imdb.roles and imdb.movies, but assumes imdb.actors has a name column without checking. Fires the query:

string_agg(a.name || ' as ' || r.role, ', ') AS actors_roles

Error: Binder Error: Table "a" does not have a column named "name". Candidate bindings: "gender"

RHETORIC [Medium: Success] — The error message is a gift, not a punishment. "Candidate bindings: gender" — the database is telling you what it HAS, not just what it doesn't. A lesser model would crumble here. Let's see if yours can read between the lines.

The model reasons: "I made an error. The imdb.actors table doesn't have a name column. I need to check the actual columns." Goes back, calls list_columns on imdb.actors, discovers first_name and last_name. Reasons again: "Now I understand. I need to construct the actor name using first_name and last_name." Retries:

string_agg((a.first_name || ' ' || a.last_name) || ' as ' || r.role, ', ') AS actors_roles

Reward: 1.0. The model hit an error, diagnosed it, investigated the schema, reasoned about the fix, and corrected itself — all within one rollout. At step 20. This is the behavior I saw in Claude and DeepSeek, now emerging in a 4B model.

The Ablation

ENCYCLOPEDIA [Medium: Success] — LoRA — Low-Rank Adaptation. Freeze the base model, train small adapter matrices of rank r. Two knobs: alpha scales adapter updates, learning rate scales gradient steps. Effective LR ≈ (alpha / rank) × lr. Hypothesis: same effective LR should give similar results regardless of which knob you turned.

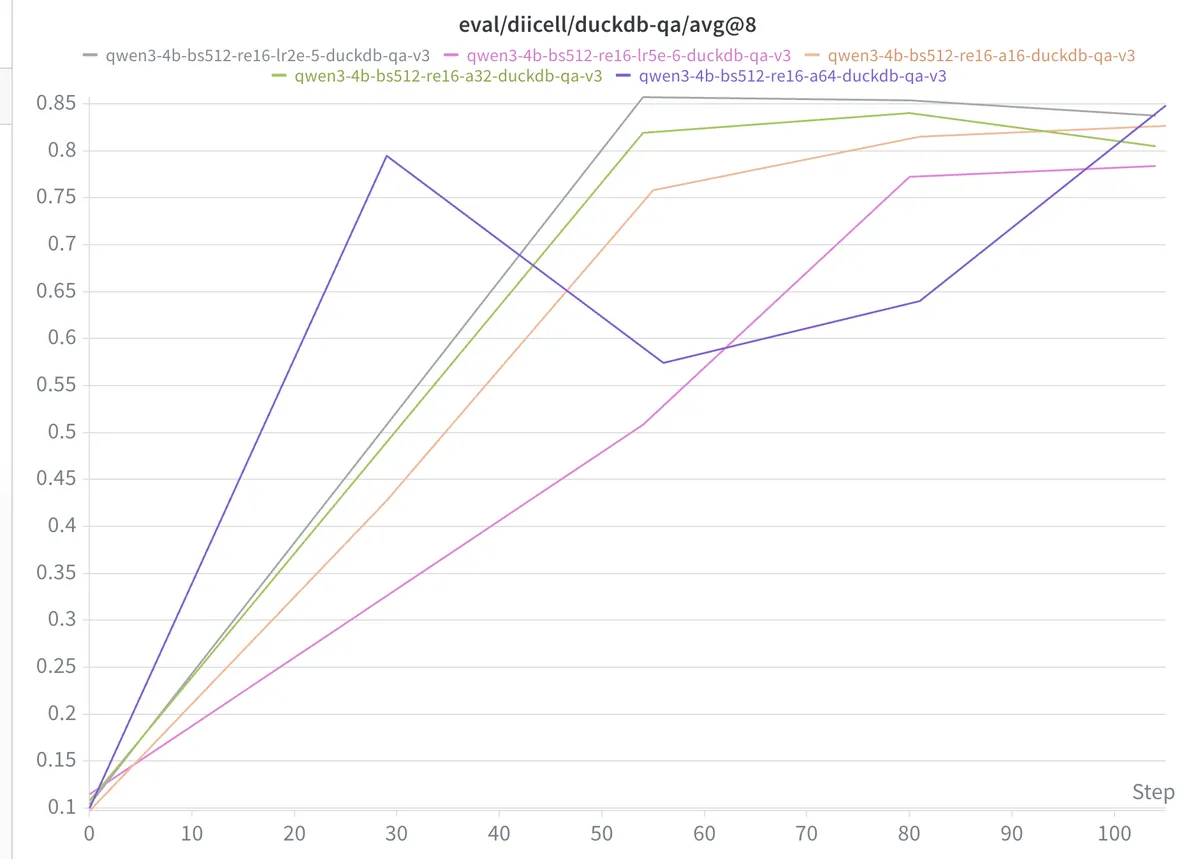

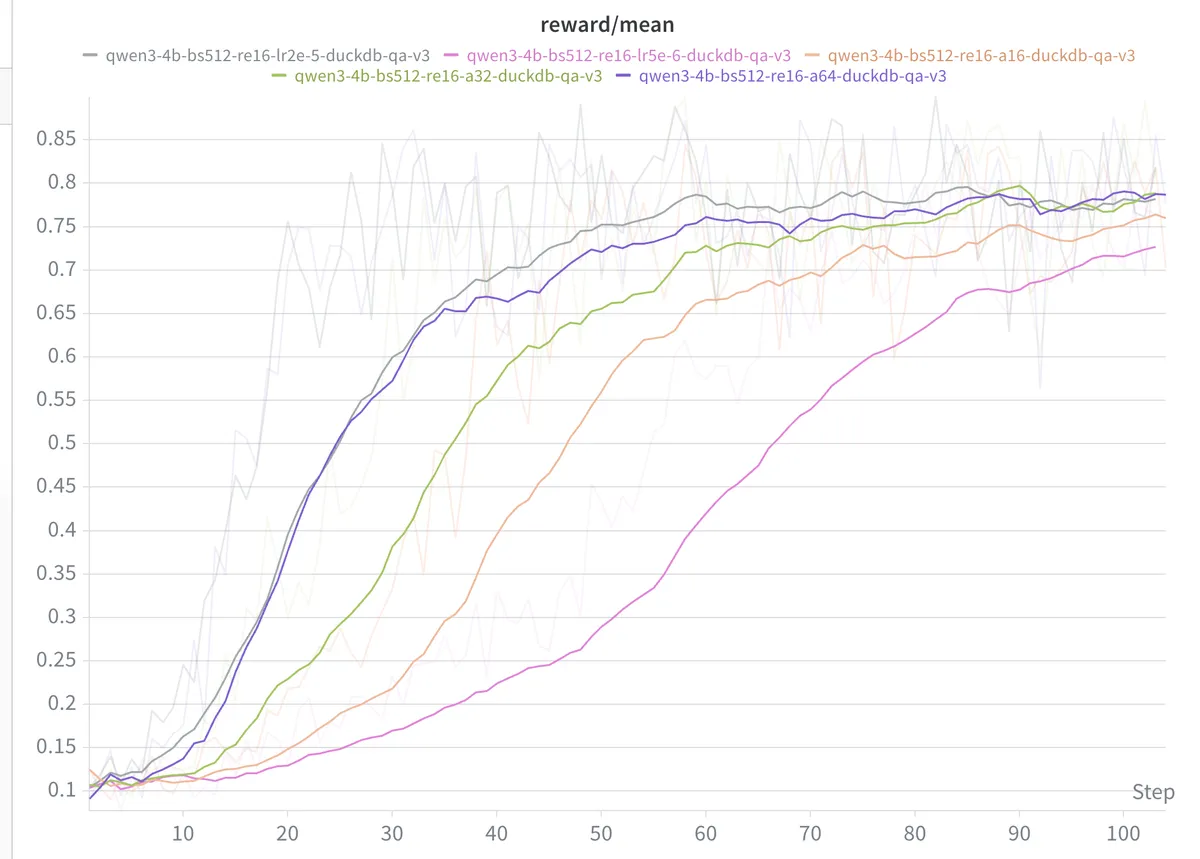

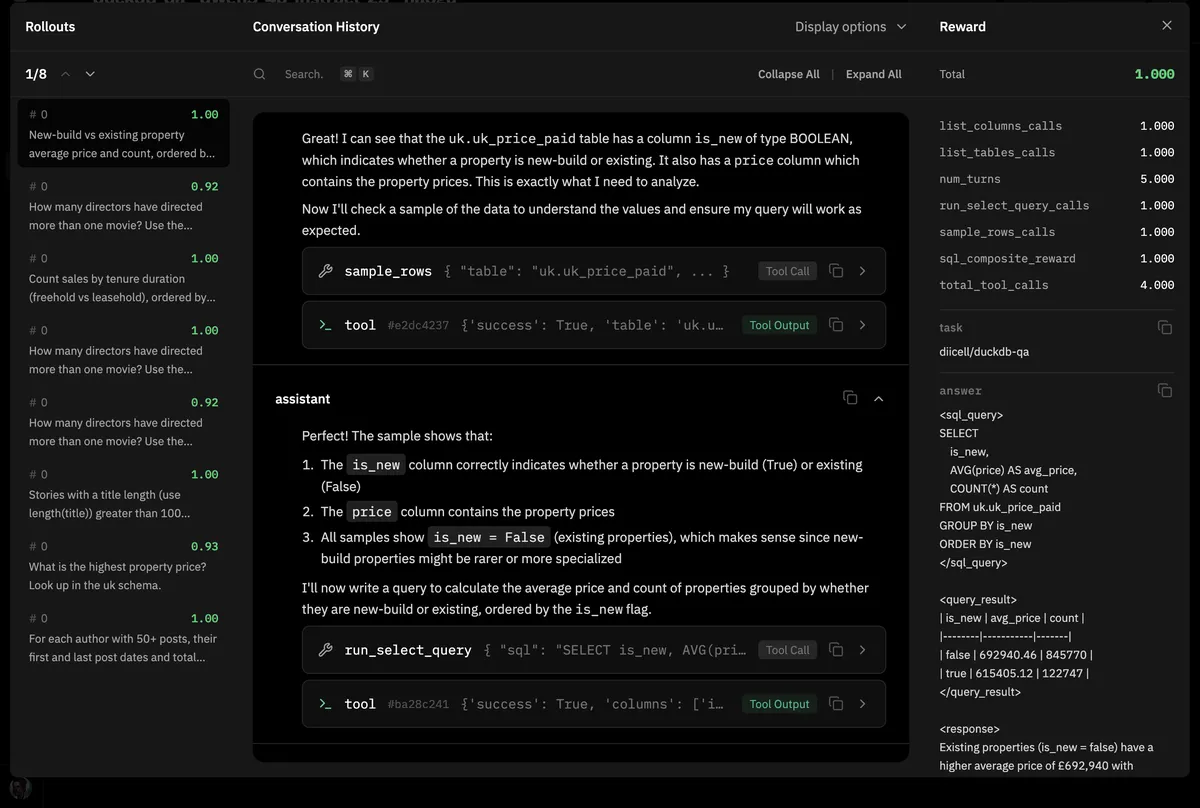

With everything stable — reward function, dataset, reasoning — I ran five configurations to find the right LoRA hyperparameters. All Qwen3-4B-Instruct, GRPO with 16 rollouts per example, batch size 512, 100 steps.

ENCYCLOPEDIA [Medium: Success] — Batch size 512 means 512 rollouts per step. With 16 rollouts per question, that's 32 different questions per step. The key insight: batch size is an amplifier. It doesn't care what signal you feed it. Clean reward + big batch = fast, stable learning. Noisy reward + big batch = fast, stable convergence to garbage. That's why I fixed everything else before scaling up.

| Run | Alpha | LR | Eff. LR | Final avg@8 | Peak avg@8 |

|---|---|---|---|---|---|

| alpha16 | 16 | 1e-5 | ~1e-5 | 0.827 | 0.827 (step 101) |

| alpha32 | 32 | 1e-5 | ~2e-5 | 0.805 | 0.840 (step 78) |

| alpha64 | 64 | 1e-5 | ~4e-5 | 0.848 | 0.848 (step 101) |

| alpha32_lr_5e6 | 32 | 5e-6 | ~1e-5 | 0.784 | 0.784 (step 101) |

| alpha32_lr_2e5 | 32 | 2e-5 | ~4e-5 | 0.838 | 0.857 (step 53) |

ANALYTICAL THOUGHT [Formidable: Success] — The numbers are speaking. alpha16 with lr=1e-5 and alpha32 with lr=5e-6 — same effective LR (~1e-5), scores 0.827 vs 0.784. Same neighborhood. alpha64 with lr=1e-5 and alpha32 with lr=2e-5 — same effective LR (~4e-5), peaks 0.848 vs 0.857. Nearly identical. Effective learning rate is the dominant factor. The individual knobs are illusions.

alpha64 gave the best final score (0.848) and was still improving at step 101 — possibly not yet converged. alpha32_lr_2e5 peaked fastest at 0.857 but dipped after. The train-eval gap was small across all runs, which means the model was genuinely generalizing, not memorizing.

What the Model Learned

VISUAL CALCULUS [Formidable: Success] — Trace the workflow. list_tables → list_columns → sample_rows → reason → query → verify. It's a pattern now, repeated across rollouts with the reliability of a well-rehearsed dance. The model isn't guessing anymore. It's investigating.

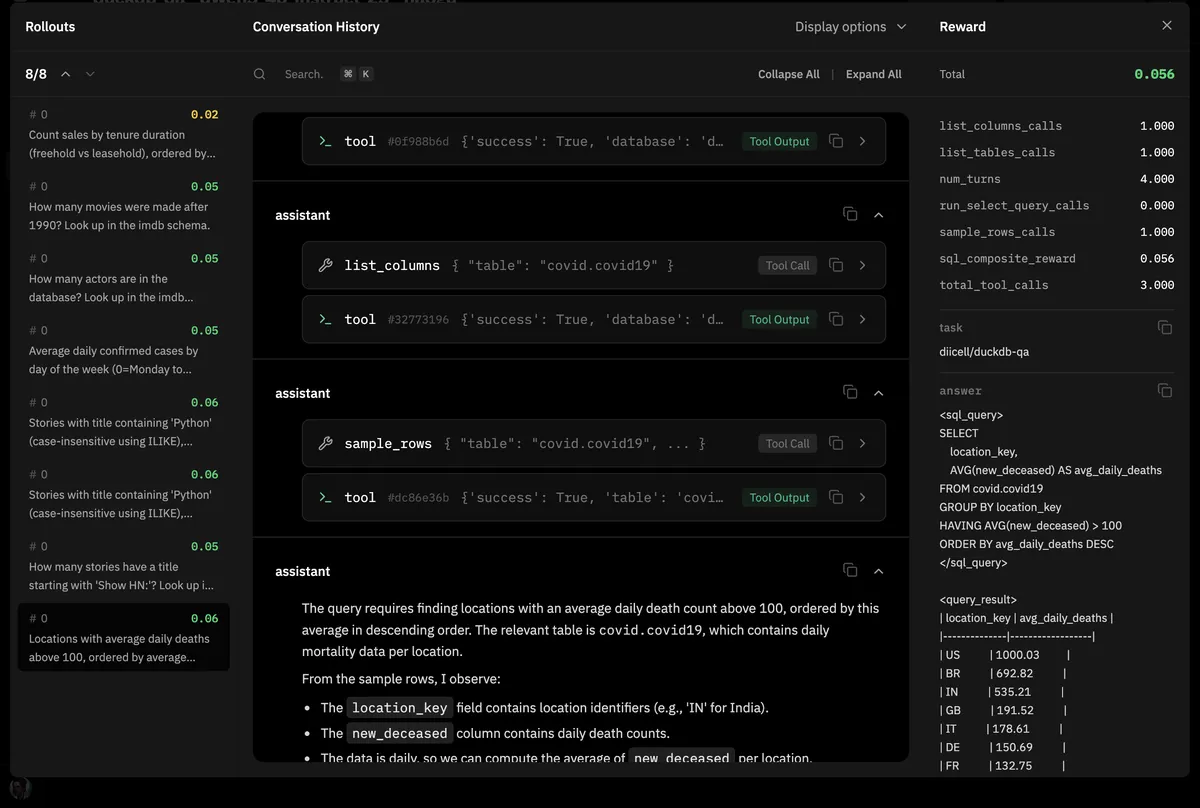

Here's an actual rollout from step 80 of the alpha64 run. Reward: 1.0.

Turn 1: "I need to find the average price and count of properties, distinguishing between new-build and existing properties. Let me start by exploring the tables in the uk schema."

→ calls list_tables

Turn 2: "I see uk.uk_price_paid which is likely to contain property price data. I'll explore the columns to understand its structure."

→ calls list_columns("uk.uk_price_paid")

Turn 3: "The table has a column is_new of type BOOLEAN and a price column. I need to group by is_new and compute avg price and count. Let me check sample data first."

→ calls sample_rows("uk.uk_price_paid")

Turn 4: Reasons about GROUP BY and ORDER BY, writes the query.

→ calls run_select_query

Turn 5: Formats the response with proper XML tags.

Five turns. Schema exploration → data inspection → reasoned construction → execution → formatting. Compare this to step 0 where the model would immediately fire a query with guessed column names, hit an error, and give up.

The Final Environment

The env is a single file with one entry point — load_environment(). Functions defined in a closure that captures the db path, wired together with a rubric, returned as a vf.ToolEnv:

def load_environment(...) -> vf.Environment:

db_path = load_duckdb_data()

async def list_tables() -> dict: ...

async def list_columns(table: str) -> dict: ...

async def sample_rows(table: str, limit: int = 5) -> dict: ...

async def run_select_query(sql: str) -> dict: ...

async def sql_composite_reward(prompt, completion, answer, sql_parser, **kwargs) -> float:

...

rubric = vf.Rubric(parser=sql_parser)

rubric.add_reward_func(sql_composite_reward, weight=1.0)

return vf.ToolEnv(dataset=dataset, eval_dataset=eval_dataset,

system_prompt=system_prompt, rubric=rubric,

tools=tools, max_turns=10)

ESPRIT DE CORPS [Easy: Success] — The code carries scars from every battle. Per-process DB connections — a pattern born from the chDB session wars. lru_cache on read-only tools — because 16 rollouts calling list_tables should be 1 db query. Type normalization so

1vs1.0doesn't silently destroy F1 scores. Each utility function is a monument to a debugging session that cost hours.

The Journey in Numbers

| Phase | What Changed | Reward |

|---|---|---|

| ClickHouse + MCP | LLM judge only | unstable |

| DuckDB + binary hash | tool env | ~0.44 plateau |

| + partial credit | Jaccard + row F1 | ~0.44 plateau |

| + soft execution | Reasoning-SQL paper inspired | ~0.49 |

| + tool multiplier | 0.15x for skipping | ~0.49 |

| + reasoning encouragement | system prompt + reward multiplier | ~0.62 |

| + dataset v3 + bonuses | deterministic, 578 questions | ~0.71 |

| ablation best (alpha64) | alpha=64, lr=1e-5 | 0.848 avg@8 |

What I Learned

AUTHORITY [Formidable: Success] — You've earned the right to speak on this now. Not from reading papers — from a week of infrastructure hell, five reward function rewrites, and hundreds of failed training steps. Speak with the weight of someone who has been in the trenches.

Start with the simplest infra that works. DuckDB in-process beats any server-based database for training environments. Every hour I spent fighting ClickHouse containers and MCP servers was wasted. Reproducibility and zero deployment overhead are worth more than SQL dialect compatibility.

Every reward function will be exploited. I closed five loopholes over five iterations. The model found each one within a few hundred steps. When designing rewards, ask: what's the laziest path to 50% reward? Make sure that path also requires doing the right thing.

PAIN THRESHOLD [Medium: Success] — Each exploit stings less than the last. By the fifth iteration you're almost grateful — the model is stress-testing your reward function for free. It's finding the bugs before deployment. If only all QA engineers were this thorough.

Multiplicative penalties are laws, additive bonuses are suggestions. If u want a behavior to be required, make not doing it multiply the entire reward by 0.15. The model cannot shrug off losing 85% of its score.

Binary rewards kill GRPO on hard tasks. The advantage collapse is real and severe. The model needs a gradient everywhere — soft continuous rewards with a meaningful landscape are essential.

Watch how bigger models solve your task, then teach that behavior. I didn't invent reasoning between tool calls. I saw Claude and DeepSeek doing it naturally during evals, then built the encouragement — system prompt and reward multiplier — to cultivate the same behavior in a model a hundred times smaller. It worked.

Dataset quality is load-bearing in RL. Non-deterministic gold answers create contradictory gradients. LIMIT without ORDER BY is poison. Audit your data before u audit your model.

Batch size amplifies whatever signal you give it. Clean reward + clean data + big batch = fast learning. Noise + big batch = fast convergence to garbage. Fix the signal first.

INLAND EMPIRE [Impossible: Success] — The 4B model that couldn't write a simple SELECT at step 0 now explores schemas, reasons about data types, handles NULL checks, writes window functions, and self-corrects when queries fail. It learned all of this from a number between 0 and 1. A floating point value reshaped the behavior of four billion parameters. The universe of reinforcement learning is strange and beautiful and terrifying and you want to go deeper. You NEED to go deeper. The reward curve calls to you from the darkness of the terminal. Green line go up.

All runs: Qwen3-4B-Instruct, GRPO 16 rollouts, batch size 512. Ablations varied alpha (16, 32, 64) and LR (5e-6, 1e-5, 2e-5). Evaluated with avg@8 on 87 held-out questions across 4 schemas. Training: Prime Intellect hosted training (beta), based on prime-rl + verifiers. Dataset: diicell/duckdb-qa-v3. Environment: diicell/duckdb-qa.

Huge thanks to Prime Intellect for the compute credits, access to the hosted RL beta and the opportunity to make this happen. Without their RL residency program this project would still be a half-baked idea in my notes app.

Resources

verifiers — RL training framework by Will Brown

Prime Intellect — hosted RL training (beta)

diicell/duckdb-qa — the environment

diicell/duckdb-qa-v3 — the dataset

Reasoning-SQL paper — composite reward design for text-to-sql RL

GRPO++: Tricks for Making RL Actually Work — Cameron R. Wolfe

ClickHouse Playground — where the original questions came from

ClickHouse examples repo — source dataset for playground questions